Harnessing Layered Graphic Designs with Real Intentions for Text-to-Design Generation

Abstract

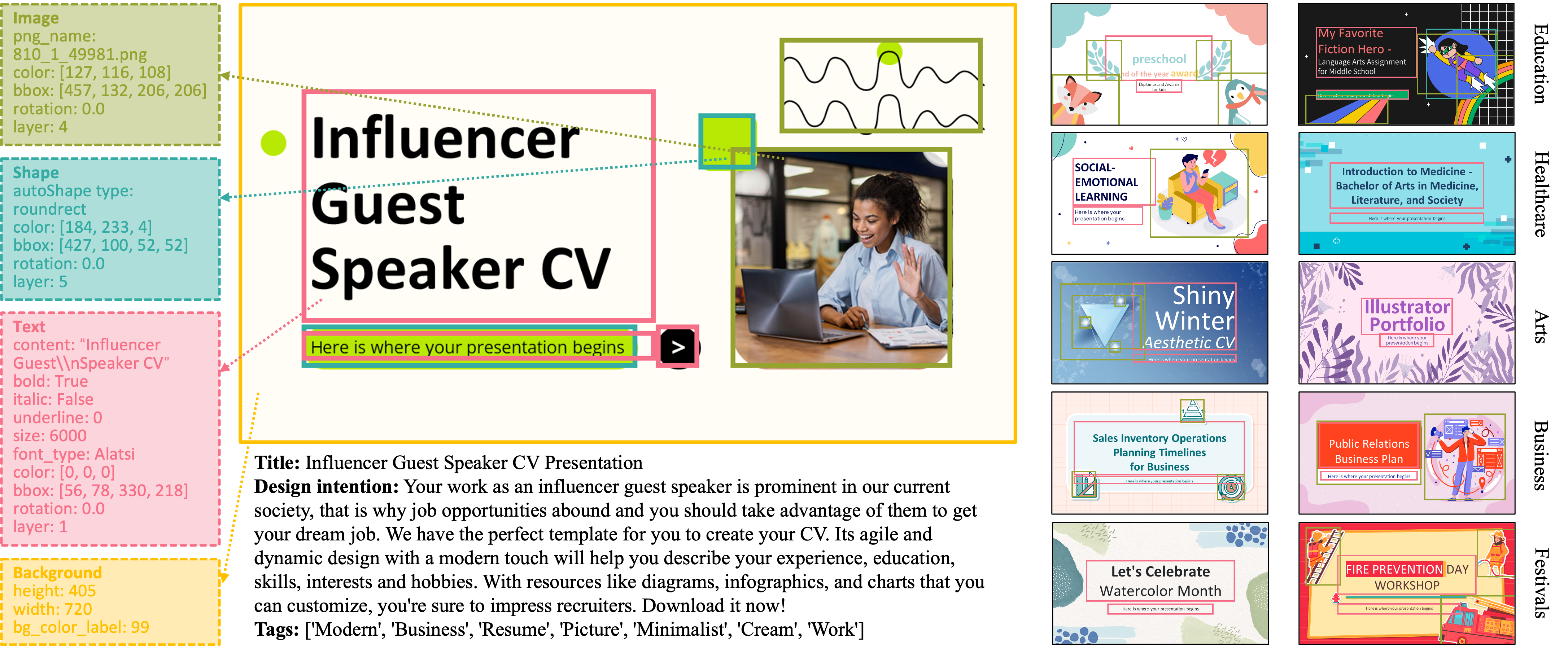

Text-to-design generation, which synthesizes plausible and diverse graphic designs from textual design intentions, has recently emerged as a research area that gains growing interest. However, further process in this area is hindered by the absence of public, high-quality paired intention-design data. To mitigate this issue, we introduce LADEREIN, a new benchmark of layered designs with real intentions for training and evaluating text-conditioned graphic design generation models. As opposed to synthetic short intention prompts in prior datasets, the intentions of our dataset are real, long and complex, spanning various factors such as design purpose, visual style, feeling, and expected audience behavior. Such real intentions allow us to train text-to-design models that generalize to real design scenarios, and make our dataset a promising ground for validating progress in text-to-design generation. For objective assessment of model performance in intention-following, we contribute, DesignCLIP, a new text-design alignment metric learned from our dataset. Moreover, we build a simple yet effective text-to-design generator, DesignDiff, as a baseline on our benchmark. We show that: 1) our DesignCLIP outperforms GPT-4V in judging the alignment of graphic designs and textual design intentions; 2) our dataset enhances the capabilities of text-to-design models in following complex user intentions accurately; 3) our DesignDiff is able to generate high-quality designs of great text alignment.